Anthropic Rejected The Pentagon's Surveillance Push - And The Fallout Could Be Massive

The fight over the future of AI is in the midst of its most important battle yet, as Anthropic continues its legal struggle against the Pentagon over its supply chain risk designation earlier this month. The standoff, sparked by the Defense Department's use of Anthropic's Claude in the January 2026 capture of Venezuelan President Nicolas Maduro, saw the startup attempt to impose its safety guidelines prohibiting violence on the U.S. military.

Weeks of tense negotiations followed, in which company and military brass jousted over whether private firms can dictate how the government deploys their products. Ultimately, Anthropic rejected the Pentagon's demands, claiming the administration wanted to add provisions in its contract enabling mass domestic surveillance and fully autonomous weapons. The decision elicited a firestorm from the White House, culminating in a first-of-its-kind supply chain risk designation. Silicon Valley, meanwhile, has both rallied behind and undermined its banished competitor, with employees publicly lobbying against the designation as company executives push to fill the military's Claude-shaped void.

The potential ramifications range from the painstakingly bureaucratic to the nightmarishly dystopian. On its surface, the feud exemplifies the transformative nature of artificial intelligence. As the technology revolutionizes our understanding of warfare, governments, companies, and civilians alike must deal with the consequences. More broadly, however, it's a story whose only true parallel might be found in the nuclear arms race. The Promethean dilemma at the center of this wave of advanced technology strikes at the heart of the most important issues facing liberal democracies today.

Reevaluating Claude

At the time of its ouster, Claude was the only AI authorized to handle classified information. Claude existed above the military's software in controlled areas of Amazon's Top Secret Cloud environment. Pentagon personnel accessed the AI through interfaces designed by big-data-turned-surveillance giant Palantir Technologies, like Project Maven, the company's controversial battlefield management tool. Pentagon personnel largely use Claude to sort massive troves of unparsed intelligence. According to CEO Dario Amodei, the Pentagon has relied on Claude for "intelligence analysis, modeling and simulation, operational planning, cyber operations, and more." These tools received a major endorsement last year, when the Pentagon struck a $200 million deal with Anthropic to pioneer new national security applications for its frontier AI system.

The controversy began when an Anthropic employee asked a senior executive at Palantir about their platform's role in the Maduro operation (via Semafor). Reportedly, the Pentagon's senior leadership took offense to this, with one official telling Axios they would "reevaluate" companies that "jeopardize the operational success of our warfighters." According to The New York Times, the event escalated a months-long struggle over the Pentagon's use of Claude.

Anthropic stated that negotiations broke down over its safety guardrails prohibiting mass domestic surveillance and fully autonomous weapons. The Pentagon, meanwhile, countered that it should be able to utilize the technology in any lawful manner it sees fit, an argument Amodei has criticized given lagging congressional oversight.

Everything is politics

The debate is indicative of a broader political schism in the AI industry, in which Anthropic's safety-first approach clashes with its competitors' move-fast-and-break-things attitudes. In fact, the company's existence is rooted in this political rift: its sibling founders left leadership positions at OpenAI to form a safety-minded competitor. Since then, Anthropic has billed itself as an ethical alternative to its grow-at-all-costs counterparts, instituting a Responsible Scaling Policy promising to never train an AI system without instituting adequate guardrails. As CEO, Dario Amodei has been vocal about AI's dystopic applications, sounding the alarm about the threat AI poses to democratic institutions, writing, "I see no strong reason to believe AI will preferentially or structurally advance democracy and peace."

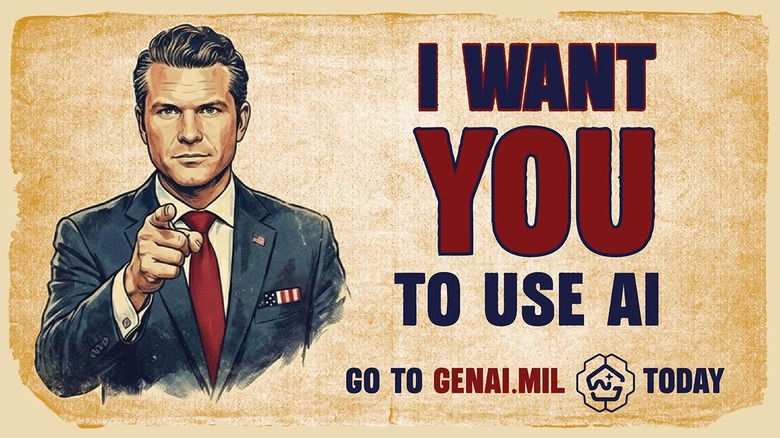

Anthropic's politics consistently clash with the Trump administration's efforts to deregulate the AI space. As negotiations with the Pentagon mounted, Amodei made headlines by establishing a $20 million super-PAC to counteract anti-regulatory efforts ahead of the upcoming midterm elections. By late February, this stance had rankled leading members of the Defense Department. Secretary Pete Hegseth, for his part, has publicly criticized companies looking to constrain his Department's strategic playbook, saying in a January 2026 speech announcing the Pentagon's deal with xAI that the U.S. "will not employ AI models that won't allow you to fight wars." Meanwhile, Trump's AI czar David Sacks lambasted Anthropic as early as October 2025, calling the company proponents of "Woke AI" (via CNBC).

Anthropic's contract dispute escalated these fraught political divides. During weeks of public sparring, the Pentagon pushed the San Francisco-based company to add qualifying language to its safety guardrails. Anthropic, for its part, spent much of that time appeasing the administration, even renouncing its ethical training pledge in hopes of eliciting concessions on safety constraints.

A doomed deal

One day before Hegseth's deadline, Amodei rejected the deal. Reportedly, it broke down over the Pentagon's mandate for domestic surveillance loopholes, enabling bulk data analysis of Americans' internet histories, chatbot conversations, GPS movements, and financial transactions (via The Atlantic). For many observers, the writing was on the wall regarding the price of noncompliance. Prior to the decision, a senior official told Axios, "we are going to make sure they pay a price for forcing our hand like this."

Indeed, the administration's response was extraordinarily punitive. On March 5, it made Anthropic the first American company to be labeled a supply chain risk. Traditionally reserved for foreign companies that spark national security concerns, like Chinese tech conglomerate Huawei, the designation prevents any federal partners from doing business with Anthropic. Although details remain unclear, court documents estimate it could cost Anthropic $5 billion. The designation has caused an uproar across the industry, as AI leaders, industry lobbyists, and Democratic politicians publicly advocate for the spurned giant. While the industry vocally supported Anthropic, its competitors rushed to fill the Claude-shaped void. Within hours of Hegseth's decision, OpenAI announced a deal to replace Anthropic, sparking consternation within the firm. Google and xAI have also moved to handle the Pentagon's classified work.

Rhetoric from both sides only emphasizes the political undercurrents of the disagreement. Following the decision, President Trump declared that the U.S. won't allow "A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!" Furthermore, Hegseth wrote that "Anthropic delivered a master class in arrogance and betrayal . . . cloaked in the sanctimonious rhetoric of 'effective altruism.'" Undersecretary Emil Michael accused Amodei of having a "God-complex." Amodei, meanwhile, reportedly told staffers that the designation was punishment for refusing to offer the President "dictator-style praise."

The fallout

The Pentagon's disavowal of Anthropic is sure to be litigious and logistically complicated. Although agencies are proceeding with ousting Claude from their systems, including the Treasury and State Departments, observers believe it'll take anywhere from six to 18 months to disentangle from Anthropic. Reports within the Pentagon suggest hesitancy to transition from the platform (via Reuters). Palantir, meanwhile, must replace Anthropic within its Maven system, a slow, arduous task requiring it to rebuild sections of software. The possibility of a renegotiated deal with Anthropic further complicates the untethering process. To date, Anthropic has both sued the federal government and solicited its return to the negotiating table as investors push for de-escalation.

Nevertheless, the administration continues to plow ahead with the transition despite relying on Anthropic to orchestrate its airstrikes in Iran. According to Bloomberg, Pentagon officials will continue to use Claude in the conflict for at least another month. Rarely do we see the consequences of policy debates play out in real time. The Iran backdrop illuminates both the military's growing dependence on AI systems and the urgency of Anthropic's moralistic crusade.

Fundamentally, both sides' arguments are best understood as attempts to exert control in a paradigm of uncertainty. As Ashley Deeks describes in her book "The Double Black Box," AI presents a unique challenge for democratic governments and the companies servicing them. The Pentagon must confront a world in which it possesses limited developmental control over, or deep understanding of, the technologies it looks to deploy. Anthropic, meanwhile, has limited agency over or insight into the military's ultimate uses of its algorithm.

Anthropic's Promethean moment

Anthropic's rationale begs to be understood within the context of the roaring AI race, reflecting what its constitution calls "a calculated bet." In an argument that recalls those advocating for nuclear proliferation, Anthropic claims "it's better to have safety-focused labs" at the center of "one of the most world-altering and potentially dangerous technologies in human history," than the alternative. To the company's credit, OpenAI's willingness to fill Claude's shoes, presumably with softer safety constraints, somewhat attests to this logic. However, given its decision to suspend its safety pledge, it's reasonable to question Anthropic's safety commitments.

One thing's certain, however: Anthropic doesn't just want to have its cake and eat it; it also doesn't want to stomach the caloric consequences. But Anthropic cannot absolve itself of Claude's adverse ramifications. Nowhere is this clearer than in Amodei's statement elucidating his company's conflict with the Pentagon, in which he clarifies that Anthropic's moral stand isn't about whether AI should be used for mass surveillance or fully autonomous weapons, but where and when. The contradictions of this argument extend beyond Amodei's spat with Secretary Hegseth, however, and are evidenced by Anthropic's longstanding partnership with Palantir, the firm developing the domestic surveillance tools it purports to abhor.

This raises a broader existential question: can AI be built safely? Despite its claims to the contrary, Anthropic's actions point to an inherent flaw in its mission, namely, that such commitments matter little when "safety" is treated as a relative, malleable standard. What the Anthropic saga ultimately proves is the difficulty of drawing coherent lines in the sand when wading through the muddy waters of autonomous surveillance and warfare. Unfortunately, it may also mean that AI's path towards profitability inevitably runs through the gates of authoritarianism. Prometheus would be proud.