Google's New AI Compression Could Help Lower RAM Prices - Here's How

The world of electronics is a very interesting place right now, mostly due to the ongoing wave of price hikes across the industry. Many of these increases stem in part from a chip shortage driven by AI demand, which is pushing up the cost of RAM and, in turn, making computers more expensive. Prices for gaming consoles, smart TVs, and other gadgets are rising too. However, there could be a savior on its way: Google has released details about a new compression system designed to make AI more efficient in how it uses RAM, which could mean the massive new data centers popping up need less of the component.

Matthew Prince, CEO and co-founder of Cloudflare, called the algorithm "Google's DeepSeek" on X, no doubt a reference to how DeepSeek AI became popular by drastically improving how large language models are trained and use resources. Seeing this kind of praise for TurboQuant, which is what Google's new efficiency algorithm is called, is interesting. But there are still two lingering questions here.

Why should consumers care about this advancement, and how exactly is this going to affect RAM prices in the long term? Well, for starters, it could decrease demand for RAM in data centers, which might help with overall availability for consumers.

Breaking it down

You probably noticed how we said "might" back there, and there's a very important reason for that. First, TurboQuant hasn't actually been put into effect just yet. This is ultimately just research that Google has shared information about. While the company says it could make AI use RAM more efficiently, it likely won't make a difference for a while. And even once it reaches data centers, it might not lower the amount of RAM needed.

That's because the size of the key-value (KV) cache, which stores conversation context so the AI doesn't have to recalculate the same things over and over, is currently a major bottleneck for AI. With increased efficiency, you're able to store more in the KV cache without needing to overwrite it. However, if you continue to add more RAM to the system, then running newer, more powerful models becomes possible, so companies have an incentive to keep buying RAM rather than cut back.
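To get a feel for why the KV cache eats so much memory, here is a rough back-of-the-envelope sketch. The model dimensions below (32 layers, 8 key-value heads, and so on) are hypothetical numbers picked for illustration, not figures from Google or any specific model, and the function name is our own.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bits_per_value):
    """Estimate KV cache size for one conversation.

    Each token stores one key and one value vector (hence the factor of 2)
    per layer and per key-value head.
    """
    values_stored = 2 * layers * kv_heads * head_dim * tokens
    return values_stored * bits_per_value // 8


# Hypothetical model and context length, chosen only for illustration.
MODEL = dict(layers=32, kv_heads=8, head_dim=128, tokens=32_768)

full_precision = kv_cache_bytes(**MODEL, bits_per_value=16)
compressed = kv_cache_bytes(**MODEL, bits_per_value=4)

print(f"16-bit cache: {full_precision / 2**30:.1f} GiB")  # 4.0 GiB
print(f" 4-bit cache: {compressed / 2**30:.1f} GiB")      # 1.0 GiB
```

In this made-up example, storing each number in 4 bits instead of 16 cuts the cache for a single long conversation from 4 GiB to 1 GiB. Multiply that by every user a data center serves at once and it becomes clearer why compression matters, and also why the freed space is tempting to fill with longer conversations or bigger models.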

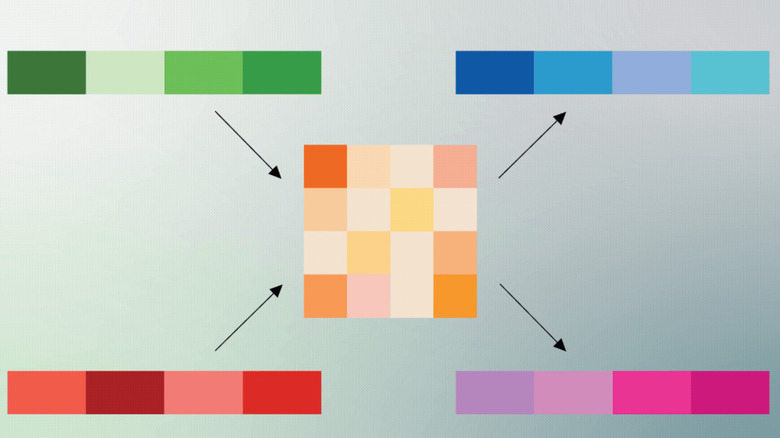

The easiest way to think of it is to imagine that AI models store all the context needed to carry on conversations with users like images in a folder. These images are then stored in the KV cache. However, as more images fill that space, it becomes more difficult for the AI to parse through the information efficiently. One way to mitigate this is to keep adding space to the cache, which is where RAM expansions come into play. TurboQuant basically takes these images, compresses them into smaller sizes, and gives them easier-to-follow categorization, so that the cache can hold and process more. There's a lot more involved than that, obviously, but the basic idea should be similar based on how Google explains it.
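For the curious, the "shrink the images" idea can be sketched in a few lines. To be clear, this is not TurboQuant itself; it is plain uniform quantization, a much simpler, generic technique, shown only to illustrate the trade of a little precision for a lot of space. The function names and sample numbers are our own.

```python
def quantize(values, bits=4):
    """Map floats to small integers in [0, 2**bits - 1] with a shared scale and offset."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize(codes, scale, lo):
    """Recover approximate floats from the integer codes."""
    return [c * scale + lo for c in codes]


# Made-up sample values standing in for a slice of cached data.
original = [0.12, -0.87, 0.45, 0.99, -0.33, 0.05, -0.61, 0.78]

codes, scale, lo = quantize(original, bits=4)
restored = dequantize(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(original, restored))

print("codes:", codes)
print("largest rounding error:", max_error)
```

Each value now fits in 4 bits instead of 16 or 32, and the restored numbers land within half a quantization step of the originals. Real systems, including whatever Google ships, use considerably more sophisticated methods to keep that error from hurting the model's answers.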

The hard truth

Ultimately, this means that the data center demand for RAM that is driving the price surge might go down slightly, which could lower prices. However, there's no guarantee this will happen, especially with so many companies pushing for newer, stronger models to be created, and with all the new AI features releasing across various platforms.

Google, OpenAI, and plenty of others are continually developing their AI tools and launching new and improved models. Because of that, the size of the KV cache needed to keep things operating smoothly for the hundreds of thousands of people who might use AI even once a day is going to keep growing.

Still, seeing Google's new algorithm offer a bit of possible relief is welcome. Hopefully, as AI companies continue to innovate, we'll see other changes and updates that make RAM less of a priority for AI. For now, though, it's difficult to see this as any kind of permanent solution to the problem, especially when supply and demand are already so skewed that we're seeing widespread shortages.