5 Ways Stephen Hawking Predicted The End Of The World Would Happen

Primarily known for his work on black holes, scientist Stephen Hawking had opinions on many other topics besides crazy complicated space stuff. Unfortunately for us, many of his comments came in the form of cautionary advice and warnings related to the end of the world.

While he was confident that we have what it takes to overcome various dangers (primarily by colonizing other planets), he believed we need to be very careful for the next century or so until colonization efforts become possible. With the entirety of our species on one planet, all it takes is one asteroid, one devastating disease, or one nuclear war to wipe us all out.

To keep ourselves safe from the various threats we face now and those we could face in the future, we first need to be aware of them. That's why Hawking spent so much time thinking about all of the ways the human race could end, and why he chose to share his thoughts. Here are some of the world-ending scenarios he envisioned.

1. Global warming

Starting with the most obvious and most pressing issue, Stephen Hawking was very concerned about global warming and the sluggish speed at which we're combating it. In 2017, he spoke out about U.S. President Donald Trump's decision to pull out of the Paris climate agreement, saying it will "cause avoidable environmental damage to our beautiful planet."

While criticisms of the Paris agreement exist and some data suggests it won't do enough to keep global temperatures below the target, this isn't necessarily relevant to Hawking's argument. The most important part of the agreement isn't its current details and goals, but the participation of almost all the nations in the world. When the world's second-biggest greenhouse gas emitter pulls out of the deal, it not only slows down our current efforts, but also puts future global efforts at risk. Global unity is a very precarious thing.

Unfortunately, Hawking's comments on this subject are still relevant, since President Trump has again taken the U.S. out of the deal as of January 2026. Hawking also believed that we are "close to the tipping point where global warming becomes irreversible," and painted a grim picture of a future Earth with a Venus-like climate of more than 400 degrees Fahrenheit and sulfuric acid rain. Some scientists call this hyperbole, however, and argue that the Earth's climate would never go in the direction of Venus. Still, since it would go in a not-suitable-for-humans direction either way, it's debatable how much that distinction matters to the discussion.

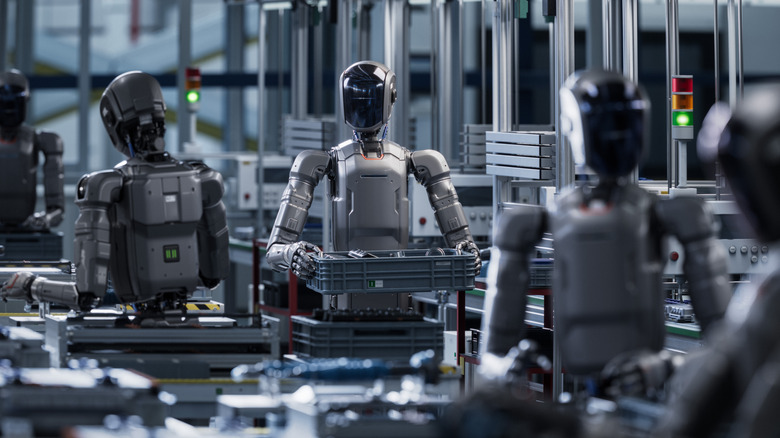

2. AI taking over

Like many of us, Hawking was somewhat undecided on AI. He said "the advent of superintelligent AI would be either the best or the worst thing ever to happen to humanity," and warned of the dangers of misalignment: "A superintelligent AI will be extremely good at accomplishing its goals, and if those goals aren't aligned with ours we're in trouble."

In other words, even if AI never explicitly turns against us, it may evolve so far beyond humans that it wouldn't consider us important at all. In this situation, we could end up in the same position we put wildlife in today — we (mostly) don't kill them out of malice, but our big, important human projects destroy their homes and make it much harder for them to survive on this planet.

Hawking wasn't around for the release of ChatGPT and the birth of the current AI boom (or AI bubble), but he probably knew it wasn't far off. In 2015, he was one of the experts who signed the "open letter on artificial intelligence," which highlighted both the potential positive and negative effects of AI. The main purpose of the letter was to encourage proper research into AI and AI safety, so we could make systems that maximize the benefits while minimizing the risks. We don't know what Hawking would think of the situation today, but it seems likely that he wouldn't see the mass-commercialization of AI as a promising first step towards safe, sustainable, and useful future systems.

3. Genetic engineering

Hawking also touched on the subject of genetic engineering in his essays, predicting that we would "discover how to modify both intelligence and instincts such as aggression" within the current century. Though laws to control such procedures would surely be made, Hawking anticipated that the wealthy would still find a way to genetically improve themselves and their children, which would ultimately bring about a new race of superhumans.

We don't really even need Hawking to tell us what would come next; we've seen it in countless sci-fi stories — the improved humans and unimproved humans would be at each other's throats in no time! Maybe we'd wipe each other out, maybe one side would prevail, or maybe a different world-ending scenario would occur while we were distracted by the conflict.

Our own bodies aren't the only thing we might genetically engineer, however. Hawking also mentioned genetically engineered viruses as a potential threat. Right now, research in this area is, of course, aimed at doing good by fighting cancers and other diseases, but the more prevalent a technology becomes, the more varied its applications become. We don't know who would do it or why, but if it's possible to genetically engineer a deadly, contagious virus, there's a scarily significant chance that someone will.

4. Aliens messing us up

Hawking didn't argue for or against the existence of other intelligent life in the universe, instead pondering scenarios that covered both possibilities. Other life could be too far away to reach us, or it may be that even if there are organisms out there, intelligence isn't common, and we're the first to end up with it.

Despite his uncertainty, however, he was concerned about humanity actively trying to contact extraterrestrial life. If there is someone out there, we have no idea what they might be like, and Hawking argued that we could be inviting trouble by sending out signals. Any species capable of contacting or reaching Earth would surely be far more advanced than us, and he worried that conquering and colonizing would be very likely motivations for any far-traveling species.

Still, it seems he was just as curious as the rest of us, and in 2015, he was involved in the launch of a new search project called Breakthrough Listen. This project, unlike others, aimed to search for life through listening only and would avoid sending messages and signals. This distinction was important for Hawking, and at a press conference for the initiative, he again voiced his concerns on the subject, telling reporters, "A civilization reading one of our messages could be billions of years ahead of us. If so, they will be vastly more powerful, and may not see us as any more valuable than we see bacteria."

5. Failing to colonize other planets

You might think that Hawking made too many predictions about how the world will end, but it's important not to put too much stock into any single one of his ideas. It's not as if he meant each new world-ending possibility he spoke of to trump the last one — the point is that there are many ways our species could end, and ignoring that reality will only increase the likelihood of it happening sooner rather than later. But no matter how many world-ending scenarios there are, we can overcome them with just one solution: colonization.

Hawking had many complicated ideas about many complicated things, but his thoughts on colonization are both simple and undeniably true: The further we spread across the universe, the longer we're likely to survive. If we live on one planet, then the fall of just one planet will wipe us out. If we live on 300 planets, then even the fall of 299 planets won't wipe us out. Leaving Earth doesn't have to mean abandoning it, however. Instead, it could give the planet a chance to recover and give us the opportunity to build a more sustainable colony there. Also, since we wouldn't be able to take all of Earth's other species with us, leaving before the planet is truly ruined would give wildlife a much better chance of continuing to survive there.

Hawking believed we would be able to start our search for new homes around 100 years from now. He also thought we needed to be as quick about it as possible, since he believed either a "nuclear confrontation or environmental catastrophe will cripple the Earth at some point in the next 1,000 years."