A Humanoid Robot Learned Expressions From YouTube, And The Results Are Terrifying

Researchers at Columbia University published a study (via Science) in January 2026 detailing how they got a humanoid robot to look and sound a lot more like a person. By having it watch YouTube videos, they taught it to form facial expressions and move its lips in sync with its speech. The video of the robot itself is unnerving: you can see it speaking in several languages and accents with closely matched lip movements.

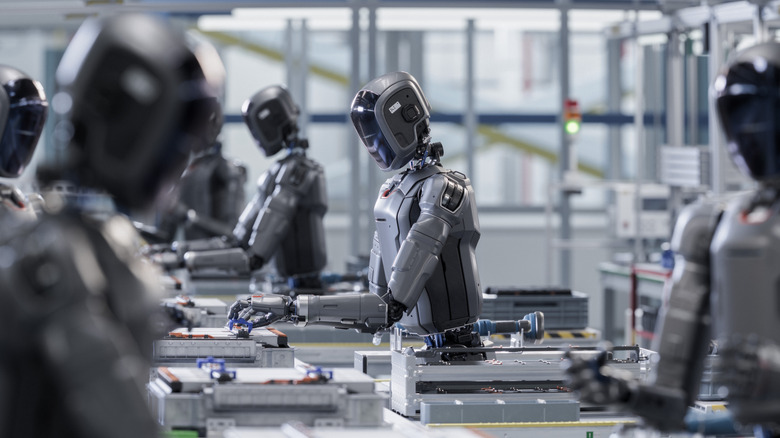

According to a Columbia University article by Hod Lipson, Professor of Innovation in the Department of Mechanical Engineering and director of Columbia's Creative Machines Lab, what keeps most humanoid robots from holding a natural conversation with humans is their lack of lip movement. Multiple companies have built humanoid robots over the years, and although some are more convincing than others, like XPENG's newest humanoid robots or Neura's options, their faces still look more robotic than human.

The research team behind this project created a humanoid robot with a proper face and 26 facial motors that move its lips to simulate speaking. The researchers say that by watching its own reflection in a mirror and then watching several hours of YouTube videos, the robot learned to mimic how we speak. Not only that, but the method avoided the "uncanny valley" effect, in which a robot's actions are similar to a human's but off just enough to evoke a feeling of uneasiness.

Lip sync is the next frontier of humanoid robots

According to Hod Lipson's article, half of a person's attention in a conversation focuses on lip motion. Using a vision-to-action (VLA) language model, the humanoid robot learned to simulate human speech in two stages: first by watching itself in a mirror, then by mimicking people in YouTube videos. The researchers said it still had difficulties with sounds like "B" and with sounds that involve lip puckering, such as "W," but the results are already impressive.

Yuhang Hu, who led the study, said, "When the lip sync ability is combined with conversational AI such as ChatGPT or Gemini, the effect adds a whole new depth to the connection the robot forms with the human. The more the robot watches humans conversing, the better it will get at imitating the nuanced facial gestures we can emotionally connect with."

The researchers tested several sounds, languages, and contexts. Some of them can be seen in a video posted by the university, which shows the humanoid robot speaking in different languages.

Robotic facial affect is important

While many companies have been perfecting how their humanoid robots walk, hold objects, and perform tasks, Lipson says, "Facial affection is equally important for any robotic application involving human interaction." He believes improving how robots react and interact with the environment will be especially important in areas like entertainment, education, medicine, and elder care. "There is no future where all these humanoid robots don't have a face. And when they finally have a face, they will need to move their eyes and lips properly, or they will forever remain uncanny," Lipson says.

While the study focused on testing these vision-to-action models, it shows that this is yet another frontier for the humanoid robot market to cross, and that companies might soon start making robots that more closely resemble people, not just machines that can kick their CEOs or show off their martial arts skills. Still, Lipson and Hu understand that the market will need to be careful about how it approaches this new venture, as there are several controversies surrounding how robots will and should interact with people.