10 Modern Technologies That Are Older Than You Think

It's easy to look around at all the technology we have today and feel like it must have been invented just a few years ago. The truth, however, is that all the technology we have is built on centuries of work done by the people who came before. Technological advancement has certainly accelerated, seeming to get faster with each of the (to date) four industrial revolutions.

But even some of the technologies you think of as recent and very modern actually have their roots in earlier times than you might expect. Often it's not the technology itself that's recent, but the commercial perfection of it. The technology existed, but it was too expensive to sell to the average person, so the general public just didn't know about it.

There are numerous examples of this happening, but we've picked a few of the most surprising for someone living in the early 21st century. How many of these did you already know were much older than people think?

The internet predates PCs

The internet is quite possibly the largest and most complex machine humanity has ever built. It required cooperation from just about every country in the world, and it's responsible for some of the most important economic, scientific, and social developments in human history. But the internet had to start somewhere, and many people might be surprised to hear that the foundational technology of the internet predates the personal computer by a long time.

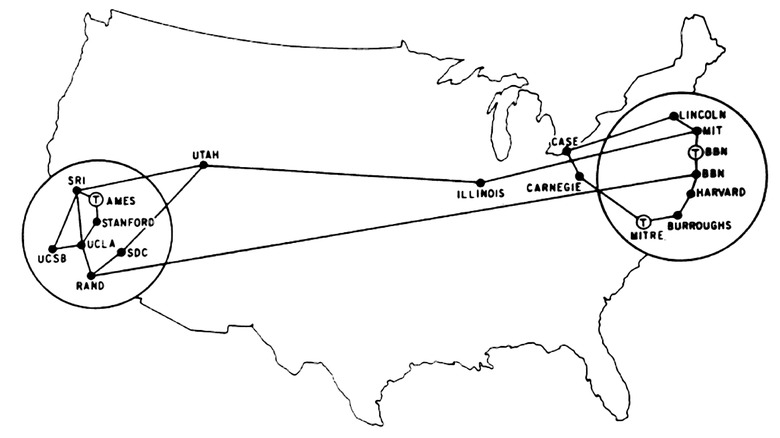

The internet got its start in the 1960s, and that little map above shows us the whole thing. That's ARPANET, a U.S. Department of Defense project to explore the idea of a decentralized network that would keep working if parts of it were knocked out. That's why the internet uses data packets that can be routed until they find a physical path that leads to the correct destination. At first, ARPANET connected major research institutions, but eventually, big businesses got in on the action too.

The personal computer only really became a thing in the '70s, thanks to early hobbyist machines like the first Apple computers. By the late '70s and into the '80s, people were connecting their "microcomputers" to online bulletin boards to exchange messages and files. Some of these still exist today, and you can visit them to see what the net was like back then. The web we all still use came in the '90s, and some 1990s websites are still alive for you to visit right now!

Touch screens are from the '60s

When the iPhone was born, it was the brave pivot to using a touch screen and nothing else as input that was truly revolutionary. However, multi-touch capacitive touch-screen technology was the real star here. Touch-screen technology itself has existed in some form since the 1960s.

Before modern smartphones, there were personal digital assistants (PDAs) with resistive touch screens, which required a stylus to work well. One of Apple's biggest product disasters of all time was a PDA called the Newton, a device that you could say is a very early ancestor of both the iPhone and iPad. There have been many different approaches to making touch input possible. In 1960, Leon D. Harmon of Bell Labs created the first touch screen, but it could only recognize the touch of a special stylus.

In 1965, E.A. Johnson of the U.K. Royal Radar Establishment invented a finger-driven capacitive touch screen. It was meant to improve air traffic control, and today we use capacitive touch screens to scroll through fun articles like this one. It's funny to think that capacitive touch screens predate the less intuitive resistive kind. Resistive screens are the sort you'll find on a Nintendo DS or 3DS, with a slightly squishy surface. We think of capacitive screens as the newer, more high-tech development, but it's actually the other way around. It's just that resistive screens were more practical right up until that first iPhone changed the world.

AI kicked off with World War II

It's hard to say exactly how old artificial intelligence (AI) is because the idea is a pretty old one. There have been fictional stories of automata that could think, and actual clockwork machines capable of computation for centuries. If we're talking about the direct modern start of AI, then the most likely point in history is World War II.

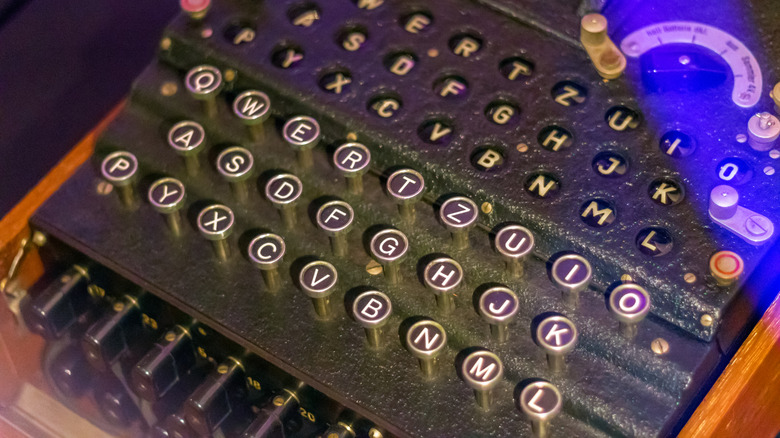

Renowned thinkers like Alan Turing laid the groundwork for modern AI during this time. Turing is famous for breaking the German Enigma encryption, but he also wrote several papers on AI and is the namesake of the Turing Test. This was an early proposed test for evaluating when an AI had reached human levels of intelligence. That means that even with the extremely primitive computer technology of the time, scholars of Turing's era foresaw that thinking machines might match and even surpass us one day.

The '50s and '60s were a golden age of rapid AI progress, but the limits of software methods and hardware eventually stalled it, leading to the first AI winter, which is commonly dated from 1974 to 1980 (a second followed in the late '80s). With rapid computer hardware advancements and the development of technologies like neural nets and machine learning, AI's potential exploded. Today, you can build a personal AI chatbot using a Raspberry Pi that could probably pass the Turing Test. This is something that was still science fiction just a decade ago, but the road here started almost a century ago.

VR is on its third tour (at least)

The excitement around virtual reality seems to have calmed a little in recent years. At one point, modern VR was so hot that Facebook renamed itself to "Meta" to reflect that the whole company was investing hard into the VR-powered "metaverse" as the future. Today, Meta is not shutting down the VR metaverse yet, but the fires have certainly cooled.

The funny thing is that this is VR's third (and so far best) attempt at becoming a thing. While we might argue that virtual reality started off in the form of immersive panoramic paintings, VR as we think of it today got its start in the late 1960s with the invention of the world's first head-mounted display. Called the "Sword of Damocles" and invented by Ivan Sutherland, this massive device and its support structure really must have felt like having a sword hanging over your head.

The first VR push you're most likely to be familiar with happened during the '90s. This was an era where you'd find VR games in the arcades, along with some very early but also very expensive consumer VR products. Unfortunately, the technology just wasn't ready. Neck-busting, bulky headsets and vomit-inducing low-frame-rate graphics are not a good recipe for a VR product. It would take until the 2016 consumer release of the Oculus Rift for the technical issues to be solved once and for all. The waning interest in current VR isn't a technology problem; people just don't seem excited.

Electric cars came before gas

While there's always some measure of dispute when it comes to history, most historians agree that the first proper car using an internal combustion engine was the 1885 vehicle invented by Karl Benz. So you might be surprised to hear that the first cars that rolled around city streets were in fact electric, and date back to the 1830s! These prototypes didn't have rechargeable batteries, but even if we start the clock with the invention of the rechargeable battery, that takes us to 1859, still decades before Benz's car.

These vehicles were quiet and easy to operate. There was also no chance that a hand-crank would break your arm. Sadly, plentiful oil, the mass production of the Ford Model T, and the primitive state of battery technology would put the advent of electric cars that could truly replace combustion engine cars on hold for over a century.

It's easy to think of electric vehicles as a very modern invention, but engineers and scientists have been plugging away at them since before gas burners took the roads. They aren't going to replace our gas cars anytime soon, but we would be surprised if electric cars weren't the norm by the end of the 21st century.

Cloud computing came before personal computing

There are probably a lot of things you do on a computer today that don't actually happen on your computer. Instead, the work happens on a server somewhere in a data center miles away. This is generally referred to as "cloud" computing, and it's an important part of how we use computers today.

Whether you're streaming games from a server or working on documents in Google Docs, you're making use of the cloud. The reason why Chromebooks are so cheap is that these devices were designed to be little more than a doorway to a remote, much more powerful computer.

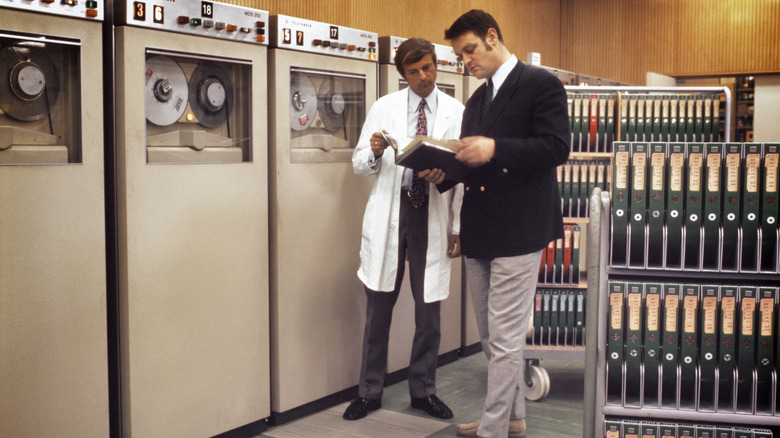

Which is funny because that's how computers started in the first place. Before the advent of personal computers in the 1970s, a computer was a massive machine inside a building, and multiple people would pay to share time on these machines. Today, we have both. You have a computer that can do amazing things offline and on its own, and you have instant access to the most powerful computers in the world, which will do even greater things for a surprisingly small amount of money. But make no mistake, it's the "personal" part of computing that's actually new.

Smart homes are older than the internet

The idea of an automated home that makes your life easier is of course quite old. In Albert Robida's "La vie électrique," we see a future imagined by an artist living in the year 1890, in which home life is made easy through electric gadgets that take care of our every need. In the 1960s, "The Jetsons" showed us a world where robots and other machines did everything from dressing you in the morning to cooking you dinner in the evening. To this day, some people still say new technologies are like "something from 'The Jetsons.'"

But what about actual home automation technology? Can we put a date on that? Yes indeed, that would be 1975 with the invention of the X10 system. This allowed devices to communicate with each other using the power wiring in a building or home. By the 2000s, technologies like Z-Wave and Zigbee brought about wireless smart home technology more recognizable to us today, but notably, none of these technologies required an internet connection to work.

In modern smart homes, most of our gadgets don't think for themselves much and instead rely on cloud computing. So in some ways, not only are smart homes older than you might expect, but they've gotten a little worse too when it comes to actually being smart!

3D printing is from the '80s

Today, we are blessed with many cheap 3D printers, and it's easy to take these mini desktop factories for granted, but the road to making this technology affordable so that anyone can enjoy its benefits has been a long one.

It all started in the 1980s with a technology called "stereolithography." Charles Hull filed a patent for this technique in 1984, and it works by using a UV laser to precisely cure resin. This could be used for rapid prototyping, which made engineering jobs in sectors like aerospace much easier and cheaper. By the late '80s, stereolithography was joined by selective laser sintering (SLS) and fused deposition modeling (FDM). With SLS, you fuse powdered material with a laser, rather than curing resin. With FDM, you extrude melted plastic filament to build up an object one layer at a time. Most of the 3D printers people have in their homes today are FDM, although resin printers are also available.

3D printing technology continued to advance throughout the '90s and 2000s, but its cost and complexity kept it firmly in the realm of big business. Then, in the late 2000s, the RepRap project proved that an affordable desktop 3D printer was feasible. Fast-forward to the present day, and you can now buy a multi-material color 3D printer like the Centauri Carbon 2 for under $500, but it took over 40 years to get there!

Email got its start in the '70s

Just like the internet itself, email feels like something from the '90s, but that's because the public at large only got on the email train at that time. Networked email was actually invented in 1971, when Ray Tomlinson sent the first one.

He created email while working on those time-sharing computers we mentioned earlier. It started as a program called SNDMSG, which was designed to send a message to another user on the same computer. However, he soon expanded the concept to sending messages to any networked computer. Combine that with ARPANET, and you get READMAIL, which let those early networked institutions send each other messages with ease. Tomlinson is also the one who came up with using the "@" symbol, which is an iconic part of modern email addresses.

Tomlinson himself admitted that his email system wasn't strictly the first, so the concept of email is even older. It's just that his solution became the most widely used, largely because the Advanced Research Projects Agency (ARPA), now the Defense Advanced Research Projects Agency (DARPA), mandated that everyone use it. Funnily enough, this humble beginning is probably why standard email is unencrypted plain text. It was designed for communication between a few highly trusted institutions, not the billions of people who use it today. Even so (and with some added security), it seems email will be with us for a long time.

CDs are not a '90s technology

In case you haven't noticed, CDs are actually making a comeback as people realize music streaming isn't all it's cracked up to be. The good news is that, unlike vinyl records, CDs sound amazing, and they give you all the same dopamine hits of collecting and handling them. Still, you probably think of these shiny discs as a product of the '90s.

While it is true that CDs hit their peak and dominated vinyl in the '90s, the technology was actually developed in the late '70s in a collaboration between Sony and Philips, and the first CD players landed in the early 1980s. The Sony CDP-101 launched in Japan in 1982, and by 1988, CD sales surpassed vinyl as people embraced these high-fidelity, durable discs. It probably also helped that CDs became playable in cars and on the go with products like the Sony Discman.

CDs also quickly became a dominant data storage format, revolutionizing devices like game consoles, and DVDs and Blu-rays were an evolution of CD technology. So even if music CDs became much less popular due to the rise of the iPod and later internet music streaming, this tech never really left us. We wonder which technology that someone is working on today will change our lives decades from now. Sadly, it's probably not flying cars.